This article will help a non-technical person understand natural language processing and why it matters for helping your page rank better in search engines (SEO).

Already know about Bots and SERPs? Skip to Natural Language Processing.

What Do Search Engines Do on a Web Page?

In simple terms, a bot (a piece of scanning software) crawls a web page capturing the content (words) and other information about the page.

Then, an algorithm (mathematical weighting formula) is applied to the captured content from the page, and a rank is assigned to that page for any given keyword in comparison with other pages.

When someone searches, Google (or whichever search engine they use) returns these ranked results.

(Of course, other elements are present in the algorithm like the number of high authority pages that link to a page, but for today we are dealing with on-page issues).
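
To make the crawl-then-rank idea concrete, here is a minimal Python sketch. It is a toy under stated assumptions, not how any real search engine works: the three pages are invented, and the "weighting formula" is simply the share of a page's words that match the keyword.

```python
from html.parser import HTMLParser
import re


class TextExtractor(HTMLParser):
    """Collects the text between tags, roughly as a bot captures page content."""

    def __init__(self):
        super().__init__()
        self.chunks = []

    def handle_data(self, data):
        self.chunks.append(data)


def page_words(html):
    """Return the lowercase words found in an HTML string."""
    parser = TextExtractor()
    parser.feed(html)
    return re.findall(r"[a-z']+", " ".join(parser.chunks).lower())


def score(html, keyword):
    """Toy weighting formula: share of the page's words that match the keyword."""
    words = page_words(html)
    return words.count(keyword.lower()) / len(words) if words else 0.0


def rank(pages, keyword):
    """Order page names from highest to lowest score for the keyword."""
    return sorted(pages, key=lambda name: score(pages[name], keyword), reverse=True)


# Hypothetical pages, invented for this illustration.
pages = {
    "jeweler": "<h1>Diamond rings</h1><p>Every diamond is cut by hand.</p>",
    "bakery": "<h1>Fresh bread</h1><p>Baked daily in a stone oven.</p>",
    "ballpark": "<h1>Field guide</h1><p>The diamond has four bases.</p>",
}

print(rank(pages, "diamond"))
```

Notice that raw word matching puts the jeweler and the ballpark in the same results list for "diamond" with no idea that they mean different things. That gap is what natural language processing is meant to close.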

Enter Natural Language Processing

Natural language processing (NLP) uses artificial intelligence to help computers understand, interpret, and manipulate the intricacies of human language.

NLP is capable of analyzing a page’s syntax to understand things like sentence content and the context of your phrasing.

NLP can analyze:

- the syntax of a page

- sentence structure

- word usage in context

- and more

NLP is able to determine whether or not a piece of content is well written — from a topical and grammatical standpoint.

Search engines rely on NLP to help them understand what any given page is about. They can then match the page to a searcher’s intent. The better the match, the higher the page will rank for that term (theoretically).

The search engine has to decipher the meaning and intent of the words on a page.

NLP is vital for search engines because individual words can have multiple meanings, many of them unrelated.

An Easy To Understand Example

Take the word diamond.

Let’s use it in a sample sentence…

The diamond was clear.

The word diamond can have multiple meanings:

- a geometric shape

- a gemstone

- a field on which baseball is played

The sentence above might have a search engine leaning towards gemstone. But that might be incorrect. There isn’t a lot of contextual information.

But what if additional entity words were located in the sentence or on our sample page to provide the Google search engine algorithm more clues?

The rain delay ensured the diamond was clear of players.

Other known entities are introduced:

- rain delay

- players

Remember, we are not talking about random words on the page, but entities. A Google patent describes an entity as a thing or concept that is singular, unique, well-defined and distinguishable. Yes, entities can be nouns (a person, place, or thing), but they can also be adjectives, concepts, or even ideas (blue, etc.).

By cataloging known entity words and, more importantly, known entity phrases and defined entities that tend to occur with each type of diamond, the bot can see if those occur on a given page. This helps the search engine’s algorithm determine which kind of diamond is intended. Now it would lean away from the gemstone meaning.

Let’s include even more defined entities into the sentence…

Hank Aaron’s grand slam ended the game, and the diamond was clear of its previous base runners.

We now have more entity words and phrases:

- Hank Aaron

- grand slam

- game

- base runners

Now, NLP allows the search engine to clearly disambiguate what type of diamond is being referenced – a field on which baseball is played.

There is no ambiguity. Even though it’s using machine learning, the search engine knows this is definitely a BASEBALL DIAMOND.
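
The diamond example can be sketched in a few lines of Python. This is only an illustration of the counting idea: the `SENSE_CLUES` catalog is hypothetical and hand-written for this article, whereas a real search engine would learn such associations from enormous amounts of text.

```python
import re

# Hypothetical catalog: entity words and phrases that tend to appear
# alongside each meaning of "diamond". Invented for illustration.
SENSE_CLUES = {
    "gemstone": ["carat", "ring", "jeweler", "sparkle"],
    "geometric shape": ["rhombus", "angle", "sides", "polygon"],
    "baseball field": ["rain delay", "players", "grand slam", "game",
                       "base runners", "hank aaron"],
}


def sense_scores(sentence):
    """Count how many clues for each meaning occur in the sentence."""
    text = sentence.lower()
    return {
        sense: sum(
            bool(re.search(r"\b" + re.escape(clue) + r"\b", text))
            for clue in clues
        )
        for sense, clues in SENSE_CLUES.items()
    }


def best_sense(sentence):
    """Return the meaning with the most clues, or None if no clue is found."""
    scores = sense_scores(sentence)
    top = max(scores, key=scores.get)
    return top if scores[top] > 0 else None


sentences = [
    "The diamond was clear.",
    "The rain delay ensured the diamond was clear of players.",
    "Hank Aaron's grand slam ended the game, and the diamond was clear "
    "of its previous base runners.",
]

for sentence in sentences:
    print(best_sense(sentence), sense_scores(sentence))
```

The first sentence gives this sketch nothing to go on, the second matches two baseball clues, and the third matches four. More entities on the page means more evidence for one meaning over the others.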

While this sounds simple for people to do, Natural Language Processing is quite a difficult feat. It requires artificial intelligence, machine learning, and massive amounts of data storage and computing horsepower.

The earliest phases of NLP used only single words in their comparisons. But now, with powerful language models like BERT and ELMo (hello, Sesame Street), the ability to gather common phrases is becoming massively more important. Phrases provide more context than isolated words.

How Do We Use NLP to our Advantage in SEO?

In SEO, we are dealing with a bot and an algorithm. Human quality assessors do grade pages and give them quality scores, but this is rare, and their scores inform the algorithm rather than rank pages by hand. Most of what is decided about ranking occurs in the world of bots and algorithms.

The words on a website’s page provide key signals to the bot and algorithm to help them understand our page’s intended topic. The more we help the search engine reduce the confusion, the better potential our page has to rank.

According to Slawek Czajkowski, one of Surfer’s co-founders, NLP is helpful in determining not only the quality of content but also its trustworthiness.

“Google is the first place people go to search for advice on a multitude of topics.

The algorithm’s job is to provide legitimate, relevant results, especially when it comes to the most sensitive and vulnerable topics like health and money (YMYL).

To get the best results…

It’s better to be pro-active rather than reactive.

To overcome the issue with unreliable information, it is important to keep low quality, untrustworthy content away from the top 10 search results. Let’s imagine people looking for a healthy, balanced diet, are served with highly commercial content offering magic pills with unreliable claims like a promise to ‘Lose 20 pounds of weight in 1 week.’

Thanks to NLP, Google’s algorithm can determine the meaning, sentiment, and the overall bigger picture.

Don’t get me wrong, NLP isn’t directly related to the E-A-T concept, but it helps to evaluate the intention, meaning, and quality of every piece of content.”

Here is more information on NLP content optimization, with examples.

How do we do this? One way is to…

Match the Best Ranking Pages with Page Tuning

Use Common Entity Phrases

One of the things that works very well when optimizing a page is to examine the common entity phrases that the top ranking pages use.

These will typically be phrases that are entity related.

The phrase “and then” holds little disambiguating potential in most cases. But a phrase like “pitcher’s mound” is a known entity.

Once we ascertain these top common entity related phrases, we can reverse engineer Google’s algorithm to see which phrases it considers essential to be included on a web page to help to clarify the topic of the page and the meaning of surrounding words.

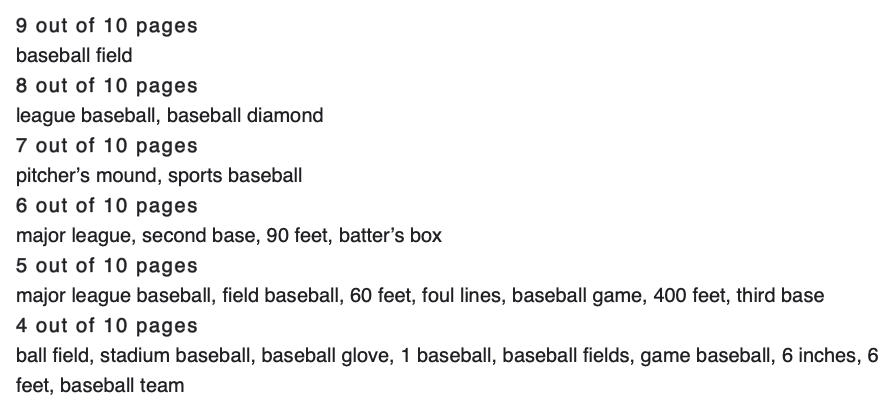

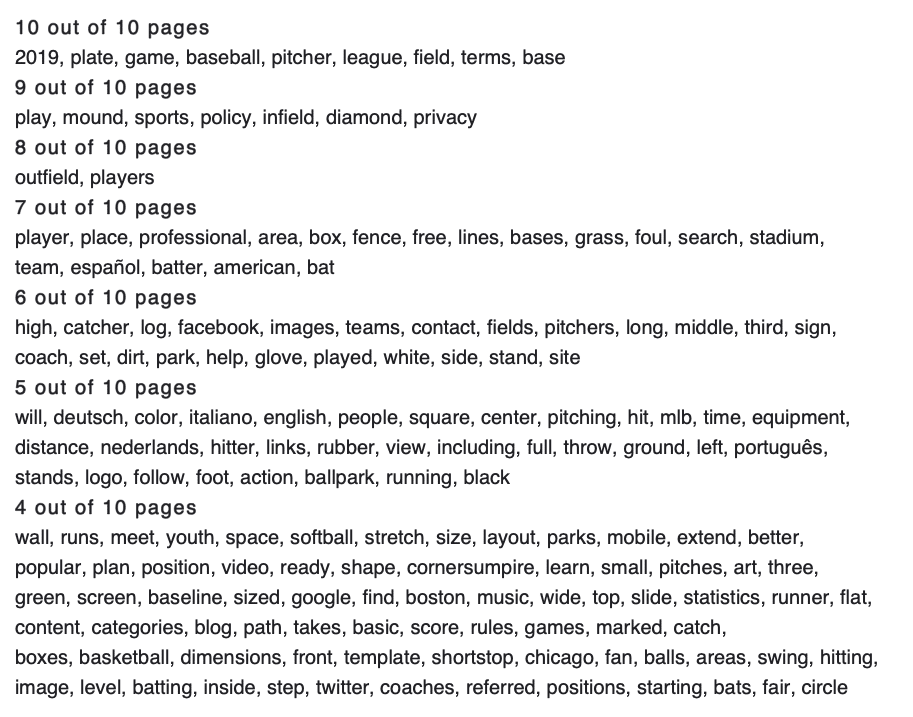

For example, if we want to signal that our diamond means a baseball diamond, these are common phrases the top 10 pages use.

We ascertain these common phrases through powerful page tuning software that does a comparative analysis.

It would be wise to ensure these common phrases are woven into our article.

Analyzing common phrases is a newer NLP method. Previously, scanning and storing common phrases and all the mathematical combinations required tons of processing horsepower and storage.

We used to use a program that looked at only common words between the top-ranking pages. With the newer page tuning software available, we can now look at common phrases. This is a better approach.
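
As a rough illustration of that comparative analysis, the Python sketch below counts how many pages share each two-word phrase. It is an assumption about the general approach, not the method any particular page tuning product uses, and the three "top-ranking pages" are invented sentences.

```python
import re
from collections import Counter


def phrases(text, n=2):
    """Return the set of n-word phrases found in a text."""
    words = re.findall(r"[a-z']+", text.lower())
    return {" ".join(words[i:i + n]) for i in range(len(words) - n + 1)}


def common_phrases(pages, n=2, min_pages=2):
    """Count how many pages use each phrase and keep the widely shared ones."""
    counts = Counter()
    for text in pages:
        counts.update(phrases(text, n))  # a set, so each page counts once
    return {p: c for p, c in counts.items() if c >= min_pages}


# Hypothetical stand-ins for the text of top-ranking pages.
top_pages = [
    "The pitcher's mound sits in the middle of the infield.",
    "Home plate faces the pitcher's mound across the infield grass.",
    "Chalk lines run from home plate to the outfield fence.",
]

shared = common_phrases(top_pages)
for phrase, page_count in sorted(shared.items(), key=lambda kv: (-kv[1], kv[0])):
    print(page_count, phrase)
```

The output includes useful phrases such as "pitcher's mound" and "home plate", but also filler such as "the infield". A real tool would need an extra step to keep only entity-related phrases, which this sketch leaves out.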

Use Common Words

While common phrases are now given more importance, common entity words can also help with NLP and SEO rankings. Here are the common words used in articles about:

Using NLP on Key Elements of the Page for Better Rankings

We can also use software to see if the main keyword, a variant of that keyword, or partial keywords are used in elements of the high ranking pages. Then, we can reverse engineer our page.

Example: Is ball field used in a sub-header and, if so, at what level of sub-header and how many times?

Example: Is diamond used with another word in an image alt tag on the top-ranking pages?

Example: Is the keyword used in the page title tag and, if so, is it also used in the URL and the meta description, or are variants used?

If we find these to be the case in the top-ranking pages, then logic would suggest we should also include these phrases in similar elements on our page.
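
Checks like the examples above are easy to automate. Here is a minimal Python sketch using the standard library's `html.parser`. The markup is a made-up page, and the choice of elements to inspect (title, h1 to h3, and image alt text) is our assumption for illustration.

```python
from html.parser import HTMLParser


class ElementAudit(HTMLParser):
    """Records the text of the title and sub-headers, plus image alt text."""

    TRACKED = {"title", "h1", "h2", "h3"}

    def __init__(self):
        super().__init__()
        self.found = []    # (element, text) pairs in page order
        self._open = None  # tracked tag currently being read

    def handle_starttag(self, tag, attrs):
        if tag in self.TRACKED:
            self._open = tag
        elif tag == "img":
            alt = dict(attrs).get("alt") or ""
            self.found.append(("img alt", alt))

    def handle_data(self, data):
        if self._open and data.strip():
            self.found.append((self._open, data.strip()))

    def handle_endtag(self, tag):
        if tag == self._open:
            self._open = None


def keyword_usage(html, keyword):
    """Return the elements whose text contains the keyword."""
    audit = ElementAudit()
    audit.feed(html)
    return [el for el, text in audit.found if keyword.lower() in text.lower()]


# Hypothetical page markup, invented for this illustration.
html = """
<html><head><title>Baseball Diamond Dimensions</title></head>
<body>
  <h1>How big is a baseball diamond?</h1>
  <h2>Ball field layout</h2>
  <img src="field.png" alt="Overhead view of a baseball diamond">
  <h2>Outfield fences</h2>
</body></html>
"""

print(keyword_usage(html, "diamond"))
print(keyword_usage(html, "ball field"))
```

Running the same audit over each top-ranking page and over our own page would show where our page uses the keyword differently from the pages that outrank it.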

Word Count and SEO: How it Affects NLP

We know that long-form content is currently ranking better than shorter content in a majority of cases, but why?

Two reasons, one technical and one user-focused. Let’s start with the technical.

Technical Reasons

The more words that are on a page, the more disambiguating common entity phrases, entity words, and related terms can be included on that page. A page with 1,500 words has a lot more opportunity to use the words above in a natural way than a page with 100 words.

These words do need to be incorporated into the page in the manner a typical human would use them in writing.

Copying and pasting the list of common phrases at the bottom of your post isn’t going to work because the search engine’s algorithm is smart enough to know that’s not natural usage, and it is going to flag it quickly. There is a reason it is called Natural Language Processing.

(Interesting SEO history: Years ago, long before NLP, when all the algorithm could do was raw word matches, web developers used to stuff a page with keyword variants. Some even put tons of the keyword in white font on white backgrounds so the bot would see it but not the human page viewer. That definitely doesn’t work anymore because of NLP.)

When there are a lot of expected matches of phrases and entities, the algorithm’s NLP capabilities say, “Oh, look at all these nice matches. We can clearly tell what this page is about.”

This tends to push the page up in rankings.
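
One simple way to picture "expected matches" is as a coverage score: what share of the phrases we expect on this topic actually appear on the page? The Python sketch below is our own simplification with a hypothetical phrase list. It only checks whether a phrase is present, and it cannot tell natural usage from a pasted list.

```python
def phrase_coverage(page_text, expected_phrases):
    """Return the share of expected phrases on the page and the missing ones."""
    if not expected_phrases:
        return 0.0, []
    text = page_text.lower()
    missing = [p for p in expected_phrases if p.lower() not in text]
    found = len(expected_phrases) - len(missing)
    return found / len(expected_phrases), missing


# Hypothetical expected phrases and page copy, invented for illustration.
expected = ["pitcher's mound", "home plate", "base runners", "grand slam"]
page = "A grand slam clears the bases, sending all base runners to home plate."

coverage, missing = phrase_coverage(page, expected)
print(f"{coverage:.0%} covered, missing: {missing}")
```

The list of missing phrases is the useful part: it points to topics the page could cover in natural sentences.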

End-User Reasons

The second reason is that users benefit more from comprehensive articles than from thin content. Google knows this and tends to reward longer-form content.

A search engine would rather send the user to a single page that answers all of his or her questions than have the searcher flit between five different pages seeking the answer.

If the experience of getting an answer is made difficult on a particular search engine, the searcher may ultimately abandon that engine for a different one which they perceive offers better results.

Objectifying Word Count

The good news is you don’t have to guess how many words your page should contain.

We use tuning software to see the word count on each of the top-ranking pages and compare your page’s length to theirs.

The best practice is to match the word count of the pages in the top 10 (or of those that rank above you, if you are in the top 10 already).

Of course, there might be off-page elements (strong backlinks, etc.) affecting rankings, allowing a 200-word page to rank or causing a 19,000-word article to perform poorly. We are speaking in general on-page terms. With the tuning software, we can quickly see these types of pages as outliers and ignore them in our tuning recommendations.
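
Here is one way the outlier idea could work, sketched in Python. The word counts are hypothetical, and the 1.5 × IQR rule is a common statistical convention we are assuming for illustration, not necessarily what any tuning software does.

```python
from statistics import median, quantiles


def target_word_count(counts):
    """Median word count after dropping outliers with the 1.5 x IQR rule."""
    q1, _, q3 = quantiles(counts, n=4)  # quartiles of the word counts
    spread = q3 - q1
    low, high = q1 - 1.5 * spread, q3 + 1.5 * spread
    kept = [c for c in counts if low <= c <= high]
    return median(kept), kept


# Hypothetical word counts for ten top-ranking pages.
top_10 = [200, 1400, 1500, 1550, 1600, 1650, 1700, 1800, 1900, 19000]

target, kept = target_word_count(top_10)
print(f"Target about {target:.0f} words, based on {len(kept)} pages")
```

With these numbers, the 200-word and 19,000-word pages are set aside, and the target comes from the eight pages that remain.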

“But I Don’t Want Long Pages, Those Seem Ugly to Me”

We hear this all the time.

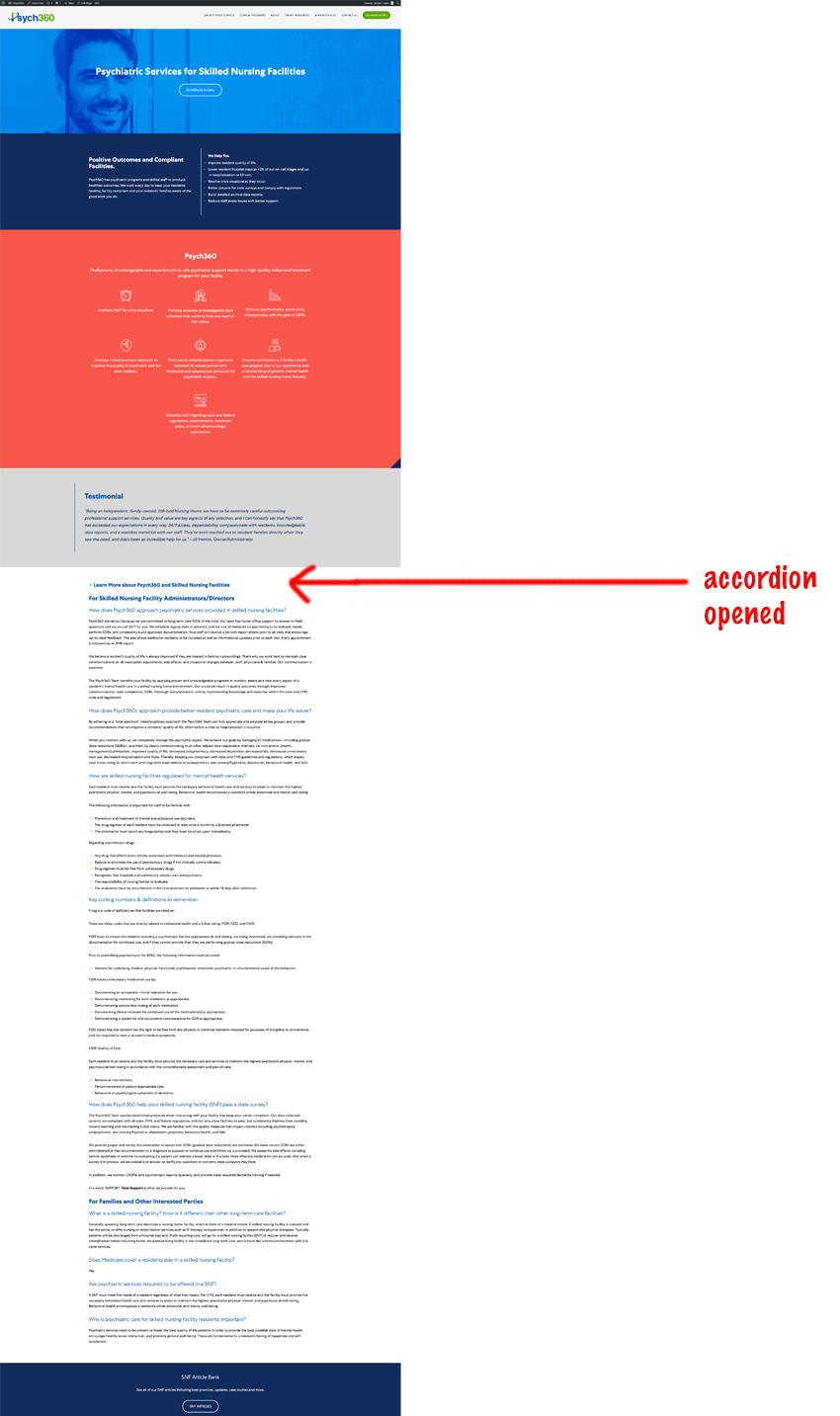

Enter the accordion, a beautiful design feature.

This longer form of content can be placed in an accordion that gets clicked open by the user.

But even if it isn’t clicked open, the bot still crawls all of it in the source code of the page. (Don’t believe us? Click here to see that they do.)

Note, this is NOT junk content. We never advocate filler or write junk content.

It’s just content that is not AS IMPORTANT as the copy that sits in the main section of the page. That should be conversion-first copy. The accordion copy is for those folks who want to research more or who like more detail.

This is definitely not the white text on white background approach. We aren’t trying to game the system, but simply put the most important content in the most visible place on the page.

Using dynamic accordions in the page’s HTML and CSS makes your pages appear more concise on a visitor’s first visit.

Designing with accordions delivers the best of both worlds: cleanly designed pages with longer forms of content related to the searcher’s queries.
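
To see why collapsed content is still available to a bot, it helps to look at the page source. The Python sketch below assumes the accordion is built with the HTML `<details>` element (one of several ways to build one, and our choice for this example). It shows only that the text is present in the source, not how any search engine weighs it.

```python
from html.parser import HTMLParser


class DetailsText(HTMLParser):
    """Collects text that sits inside <details> elements."""

    def __init__(self):
        super().__init__()
        self.depth = 0  # how many <details> elements we are inside
        self.text = []

    def handle_starttag(self, tag, attrs):
        if tag == "details":
            self.depth += 1

    def handle_endtag(self, tag):
        if tag == "details" and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth and data.strip():
            self.text.append(data.strip())


# Hypothetical page with one collapsed accordion section.
html = """
<h1>Baseball field rentals</h1>
<p>Book a diamond for your league today.</p>
<details>
  <summary>Field dimensions and rules</summary>
  <p>The pitcher's mound sits 60 feet, 6 inches from home plate.</p>
</details>
"""

parser = DetailsText()
parser.feed(html)
print(parser.text)
```

A visitor sees only the summary line until they click, yet both the summary and the paragraph beneath it are sitting in the source for any program that reads the page.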

Page Tuning using Natural Language Processing Works To Improve Your Rankings

Moon and Owl regularly and systematically tunes pages on clients’ sites. We have consistently seen a rise in ranking performance on the tuned pages.

We sometimes perform wholesale tuning on a page if we feel it is heavily under-optimized or overly-optimized and it isn’t ranking in the top 20.

For pages in the top 20, we go at a more conservative rate, tuning one element at a time (body content, sub-headers, alt tags) and watching how each tuned element affects the page’s ranking.

We keep careful notes to help us understand the results and inform future page tunings on that site.

This scientific approach, combined with the volume of page tuning we do, allows Moon and Owl to develop a prioritized list of tunings to apply. We are constantly retesting to make sure the order of the tunings is correct.

In the future, we’ll be presenting how we use Natural Language Processing and entities in structured data (Schema markup), anchor texts, and other key elements to get your site more easily found, liked and trusted by potential customers.

If you would like help with page tuning using natural language, on-page SEO, or search engine optimization in general, we would love to help you. Give us a call for an SEO audit or ongoing help.